You can apply TAK-ML ideas to a PCAP ingress pipeline — but only if you’re disciplined about where it sits and what authority it has. Otherwise you risk silently destroying evidence or biasing your dataset in ways you can’t unwind.

The right framing is TAK-ML as a relevance triage layer, not a drop gate.

Let’s get precise.

What “TAK-ML” really means in this context

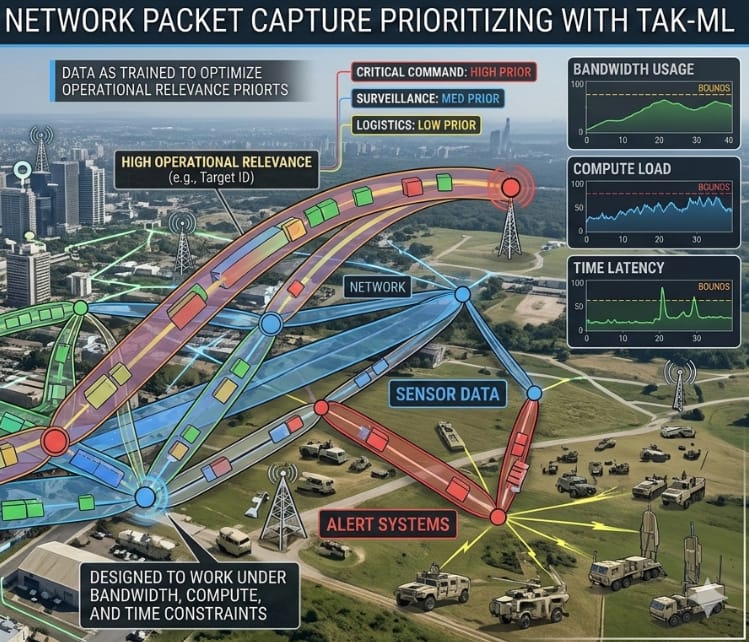

TAK-ML (as people actually use it, not how it’s marketed) is usually shorthand for:

- Task-Aware / Tactical ML

- Models trained to optimize operational relevance, not accuracy

- Fast, online, heuristic-heavy, explainability-light

- Designed to work under bandwidth, compute, and time constraints

Applied to PCAP ingress, that means:

“Can we learn which packets/flows are likely to matter later, and treat them differently before full parsing, storage, and enrichment?”

That is a valid and powerful idea.

The core danger (why this is often ill-advised)

PCAP is raw evidence.

Once you drop packets upstream based on an ML judgment, you introduce:

- Irreversible data loss

- Model bias baked into evidence

- Chain-of-custody contamination

- Unknown unknowns (novel attack patterns look “benign”)

This is especially dangerous for:

- OSINT abuse investigations

- Legal / evidentiary workflows

- Cold-case correlation (your stated long-term goal)

So:

🚫 ML should not be allowed to hard-drop packets at ingress

✅ ML can decide how deeply packets are processed, stored, or surfaced

The safe architecture: “ML-guided attenuation, not deletion”

Think of your PCAP pipeline as layers of fidelity, not a binary keep/drop.

Canonical pipeline (baseline)

PCAP

→ decode

→ sessionize

→ enrich

→ store

→ analyze

TAK-ML-augmented pipeline (safe version)

PCAP

→ minimal feature sketch

→ TAK-ML relevance scoring

→ tiered handling

├── full retention + deep parse

├── summarized retention

├── deferred / compressed

└── discard AFTER TTL (optional)

The key insight:

ML never deletes immediately. It assigns priority.

What features are safe to learn on at ingress?

You must only use non-semantic, non-payload features at this stage.

Safe, low-risk features:

Flow / transport features

- 5-tuple (src/dst IP, ports, proto)

- Packet size stats (min/mean/max)

- Inter-arrival timing

- Burstiness

- Directionality ratios

Protocol surface features

- TLS ClientHello metadata (no cert parsing yet)

- DNS query length / entropy (not content)

- HTTP method + path length (not body)

- JA3 / JA4 hashes (as categorical IDs)

Behavioral signals

- Fan-out / fan-in patterns

- Retry behavior

- Session churn

- Beacon-like periodicity

🚫 Do not use:

- Payload content

- Decoded application data

- User identifiers

- Anything that could later be argued as “selective evidence capture”

What the ML model should output

Not “drop / keep”.

It should output a relevance vector, e.g.:

{

"relevance": 0.83,

"novelty": 0.61,

"risk": 0.22,

"confidence": 0.74

}

These scores then drive policy, not destruction.

Practical policies that are defensible

Here are policies that hold up technically and legally:

1. Adaptive depth parsing

- High relevance → full decode + enrich

- Medium → headers + stats

- Low → flow summary only

2. Storage tiering

- High → SSD / hot index

- Medium → warm object store

- Low → compressed / sampled

3. Deferred discard

- Low-relevance data gets a TTL

- TTL expiry is logged, auditable, reversible in principle

4. Human-triggered override

- Analysts can “rescue” low-scored flows retroactively if a pattern emerges later

This keeps you safe from:

- False negatives

- Future reinterpretation

- Legal challenges

What kind of ML actually works here?

You do not want a heavy classifier.

Best performers in this role tend to be:

Unsupervised / weakly supervised

- Autoencoders (flow-level)

- Isolation Forests

- Streaming k-means variants

- Sketch-based anomaly detectors

Why?

- They surface novelty, not “good vs bad”

- Novelty is what analysts actually care about

Reinforcement-style feedback (optional)

- Analyst actions reinforce relevance scoring

- “I clicked this” / “I ignored this”

- No hard labels required

This aligns well with TAK-ML philosophy.

Where this fits cleanly in your ecosystem

Given your existing stack:

- Suricata / Zeek producing metadata

- Hypergraph / BSG downstream

- QuestDB / CrateDB time-series

- Visualization layers

TAK-ML belongs between packet capture and enrichment, not later.

Think:

PCAP

→ flow sketch

→ TAK-ML scoring

→ metadata stream (always)

→ payload retention (conditional)

Your hypergraph benefits, because:

- Nodes represent meaningful behaviors

- Noise is attenuated early

- You still retain auditability

When it is ill-advised

Don’t do this if:

- You need full-fidelity PCAP for forensics

- Storage is cheap relative to risk

- You’re building ground truth datasets

- You’re early in research and don’t yet know what “relevant” looks like

In those cases, TAK-ML should be read-only advisory at first.

A good compromise (recommended path)

- Phase 1: TAK-ML scores only, no policy impact

- Phase 2: Use scores for UI ranking + analyst attention

- Phase 3: Use scores for storage tiering

- Phase 4: Optional TTL discard with audit trail

If you skip Phase 1 or 2 and go straight to dropping packets, you will regret it later.

Bottom line

- TAK-ML at PCAP ingress is powerful

- Hard-dropping packets is dangerous

- Relevance scoring + attenuation is the sweet spot

- Treat ML as a lens, not a filter

If you want, next we can:

- Sketch a flow-sketch feature extractor

- Design a streaming novelty model

- Integrate relevance scores into your hypergraph

- Or build a “rescue path” for low-scored data

“This is a good question — it shows you’re thinking like a systems engineer, not just an ML user.” – ChatGPT